When it comes to Node.js design patterns, microservices are a useful pattern that can improve the flexibility and reliability of applications. However, microservice architectures must be designed carefully, because they can create roadblocks and inefficiencies that reduce team velocity.

This is especially true for enterprise-level applications and systems.

To be successful with microservices, you need to be aware of their pitfalls and drawbacks. This article explores five ways that microservices can hinder development and examines solutions that reduce unnecessary effort.

Here are the five pitfalls of microservices we feel are the most impactful:

- Delaying feedback between teams

- Cross-service querying

- Code duplication

- Over-engineering

- Breaking changes

This article will also explore methods of tackling the problems associated with microservices and balancing microservice trade-offs. We will answer the following questions:

- How can the feedback loop between inception and integration be shortened?

- How do you ensure that data spread across multiple services can be queried in an efficient manner?

- How can you prevent code duplication when using microservices?

- How many services are too many?

- How can you ensure that a change to one service doesn’t break other services?

1. Delaying Feedback Between Teams

Problem

Part of maintaining velocity in Node.js development is keeping the time that elapses between inception and integration as short as possible. Dev teams must be able to quickly determine how changing one system component will affect the rest of the system, so fast feedback is paramount.

Unfortunately, microservice architectures often introduce delays between implementing a change and receiving feedback on it, and without that feedback it is difficult to maintain quality. How can the feedback loop between inception and integration be shortened?

Solution

1) Doc-Driven Development

One way to shorten the feedback loop between inception and integration is to let documentation drive development. Services can be documented before development begins, giving developers a reliable blueprint to follow and eliminating much of the back-and-forth between teams. Specifications such as OpenAPI can be used to document the services.
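To make the doc-first idea concrete, here is a minimal TypeScript sketch. It assumes a hypothetical `orders` service whose response contract is written as an OpenAPI 3.0 fragment before any handler exists. The `contractViolations` helper is also hypothetical and checks only required fields and primitive types, so it is an illustration, not a substitute for a full OpenAPI validator.

```typescript
// Minimal subset of an OpenAPI 3.0 schema object: enough for this sketch.
interface SchemaObject {
  type: "object";
  required?: string[];
  properties: Record<string, { type: "string" | "number" | "boolean" }>;
}

// Response contract for a hypothetical orders service, agreed before coding.
const orderSchema: SchemaObject = {
  type: "object",
  required: ["id", "status", "total"],
  properties: {
    id: { type: "string" },
    status: { type: "string" },
    total: { type: "number" },
  },
};

// The OpenAPI document that teams review; handlers are written against it later.
const ordersApiDoc = {
  openapi: "3.0.3",
  info: { title: "Orders service (hypothetical)", version: "1.0.0" },
  paths: {
    "/orders/{id}": {
      get: {
        responses: {
          "200": {
            description: "A single order",
            content: { "application/json": { schema: orderSchema } },
          },
        },
      },
    },
  },
};

// Lists the ways a payload departs from the documented schema.
// Only primitive types that map directly onto `typeof` are checked.
function contractViolations(schema: SchemaObject, payload: Record<string, unknown>): string[] {
  const problems: string[] = [];
  for (const field of schema.required ?? []) {
    if (!(field in payload)) problems.push(`missing: ${field}`);
  }
  for (const [field, spec] of Object.entries(schema.properties)) {
    if (field in payload && typeof payload[field] !== spec.type) {
      problems.push(`wrong type: ${field}`);
    }
  }
  return problems;
}

// Usage: check a draft response from the implementation against the contract.
const documented = ordersApiDoc.paths["/orders/{id}"].get.responses["200"].content["application/json"].schema;
console.log(contractViolations(documented, { id: "o-1", status: "paid" }));
```

Because the contract exists first, both the service team and its consumers can run checks like this against the same document while the implementation is still in progress.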

2) Mock Services

OpenAPI descriptions can also be used to create mock services that emulate a real REST API, letting teams test how services will interact after a planned change. Client teams can call the mock's exposed fixtures to test their integrations, which lets service teams evaluate the success of a planned change before implementing it.

Any iterative changes can be made at the OpenAPI level, removing the need to change functional code until the design has been tested. Other services and dev teams can interact with the simulated output to determine how the change might affect the system.
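The following TypeScript sketch shows the core of a mock service: it answers requests from the `example` values in an OpenAPI document instead of running handler code. The document, the `/customers/{id}` path, and the `mockRequest` helper are hypothetical. Dedicated OpenAPI mocking tools do considerably more (request validation, dynamic data), so treat this only as an illustration of the idea.

```typescript
// Minimal subset of the OpenAPI 3.0 structures this sketch reads.
type Method = "get" | "post" | "put" | "delete";
interface MediaType { example?: unknown }
interface ResponseObject { description: string; content?: Record<string, MediaType> }
interface Operation { responses: Record<string, ResponseObject> }
interface OpenApiDoc { paths: Record<string, Partial<Record<Method, Operation>>> }

// Hypothetical contract for an endpoint that has not been implemented yet.
const plannedApi: OpenApiDoc = {
  paths: {
    "/customers/{id}": {
      get: {
        responses: {
          "200": {
            description: "A customer",
            content: {
              "application/json": { example: { id: "c-42", name: "Ada", tier: "gold" } },
            },
          },
        },
      },
    },
  },
};

// Turns a path template such as /customers/{id} into an anchored RegExp.
function templateToRegExp(template: string): RegExp {
  const pattern = template
    .split("/")
    .map((seg) => (/^\{[^}]+\}$/.test(seg) ? "[^/]+" : seg.replace(/[.*+?^${}()|[\]\\]/g, "\\$&")))
    .join("/");
  return new RegExp(`^${pattern}$`);
}

// Answers a request from the documented example rather than real handler code.
// This sketch uses the first documented 2xx response of the matching operation.
function mockRequest(doc: OpenApiDoc, method: Method, path: string): { status: number; body: unknown } {
  for (const [template, pathItem] of Object.entries(doc.paths)) {
    const operation = pathItem[method];
    if (!operation || !templateToRegExp(template).test(path)) continue;
    const success = Object.entries(operation.responses).find(([code]) => code.startsWith("2"));
    if (!success) continue;
    const [code, response] = success;
    return { status: Number(code), body: response.content?.["application/json"]?.example ?? null };
  }
  return { status: 404, body: { error: "no documented operation matches" } };
}

// A client team can integrate against this before the real service exists.
console.log(mockRequest(plannedApi, "get", "/customers/c-42"));
```

Changing the planned response means editing only `plannedApi`; no handler code has to be rewritten while the design is still being negotiated.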

2. Cross-Service Querying

Problem

When designing microservice architectures for enterprise applications, you may find that the data needed for a single query is spread across several services, each with its own database. In these situations, joining tables won't work, because the tables live in separate databases. How do you ensure that data spread across multiple services can be queried efficiently?

Solution

To query distributed data properly, you can use an aggregation system such as Elasticsearch. Aggregation systems let you query data spread across multiple services, and the aggregation can center on a single microservice or span multiple services.

1) Single Service Aggregation

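If the total dataset is fairly small, you can aggregate the data by centering on the first microservice and having it call the second service. This approach breaks down, however, once the data is large enough to require pagination: filtering or sorting on fields owned by the second service would require fetching every page from both services before a single page of results could be returned.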
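A minimal TypeScript sketch of single-service aggregation, assuming a hypothetical orders service that calls a customers service. The in-memory arrays stand in for each service's database, and the async functions stand in for network calls. Note that `ordersWithCustomers` loads every order before joining, which is why this approach stops scaling once results must be paginated.

```typescript
interface Order { id: string; customerId: string; total: number }
interface Customer { id: string; name: string }
interface OrderWithCustomer extends Order { customerName: string }

// In-memory stand-ins for two separately owned databases (hypothetical data).
const ordersTable: Order[] = [
  { id: "o1", customerId: "c1", total: 30 },
  { id: "o2", customerId: "c2", total: 12 },
  { id: "o3", customerId: "c1", total: 7 },
];
const customersTable: Customer[] = [
  { id: "c1", name: "Ada" },
  { id: "c2", name: "Grace" },
];

// Stand-in for the orders service reading its own database.
async function listOrders(): Promise<Order[]> {
  return ordersTable;
}

// Stand-in for an HTTP call to the customers service. A batch lookup avoids
// making one network request per order.
async function getCustomersByIds(ids: string[]): Promise<Customer[]> {
  return customersTable.filter((c) => ids.includes(c.id));
}

// The orders service centers the aggregation: it loads its own data, calls
// the customers service once, and joins the two result sets in memory.
async function ordersWithCustomers(): Promise<OrderWithCustomer[]> {
  const orders = await listOrders();
  const ids = Array.from(new Set(orders.map((o) => o.customerId)));
  const customers = await getCustomersByIds(ids);
  const byId = new Map(customers.map((c): [string, Customer] => [c.id, c]));
  return orders.map((o) => ({ ...o, customerName: byId.get(o.customerId)?.name ?? "unknown" }));
}

ordersWithCustomers().then((rows) => console.log(rows));
```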

2) Multiple Service Aggregation

In such cases, it's better to spread the aggregation across multiple services: the aggregation system collects read-only copies of each service's data and stores them in one place, so complex queries can be run, and their results paginated, regardless of which service owns the data.
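The sketch below illustrates multiple-service aggregation under stated assumptions: the owning services publish hypothetical change events, and an in-memory `OrderReadModel` class stands in for a search index such as Elasticsearch. Because both services' data lives in one place, filtering, sorting, and pagination can use fields from either service.

```typescript
interface OrderRecord { id: string; customerId: string; total: number }
interface CustomerRecord { id: string; name: string; region: string }

// Change events published by the services that own the data (hypothetical shapes).
type ChangeEvent =
  | { source: "orders"; order: OrderRecord }
  | { source: "customers"; customer: CustomerRecord };

interface OrderView { orderId: string; total: number; customerName: string; region: string }

// In-memory stand-in for a search index such as Elasticsearch. It holds
// read-only copies of data from both services, so queries never cross services.
class OrderReadModel {
  private orders = new Map<string, OrderRecord>();
  private customers = new Map<string, CustomerRecord>();

  // Upsert the local copy whenever an owning service reports a change.
  apply(event: ChangeEvent): void {
    if (event.source === "orders") this.orders.set(event.order.id, event.order);
    else this.customers.set(event.customer.id, event.customer);
  }

  // Filter on a customer field, sort on an order field, then paginate.
  query(region: string, page: number, pageSize: number): OrderView[] {
    const rows: OrderView[] = [];
    this.orders.forEach((o) => {
      const c = this.customers.get(o.customerId);
      if (c && c.region === region) {
        rows.push({ orderId: o.id, total: o.total, customerName: c.name, region: c.region });
      }
    });
    rows.sort((a, b) => b.total - a.total); // largest orders first
    return rows.slice(page * pageSize, (page + 1) * pageSize);
  }
}

const readModel = new OrderReadModel();
readModel.apply({ source: "customers", customer: { id: "c1", name: "Ada", region: "EU" } });
readModel.apply({ source: "orders", order: { id: "o1", customerId: "c1", total: 30 } });
readModel.apply({ source: "orders", order: { id: "o3", customerId: "c1", total: 45 } });
console.log(readModel.query("EU", 0, 1));
```

One trade-off of this design is that the copies are eventually consistent: a query reflects a change only after the corresponding event has been applied.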

3. Code Duplication

Problem

One of the fundamental ideas of engineering, in Node.js or any other platform, is not to repeat code unnecessarily. Unfortunately, building microservices can produce a lot of duplicated code: different microservices often need the same files and models, the same validation functions, or the same authorization functions.

Avoiding code duplication keeps microservices consistent, minimizing situations where other teams are surprised by changes at runtime. It also keeps codebases smaller and easier to manage. So how can you prevent code duplication when using microservices?

Solution

One of the best ways to reduce code duplication is to use private npm packages. Node.js and npm, the Node package manager, let you package shared code, making it portable and easy for other services to consume.

By publishing shared code as private npm packages, the services in your system can depend on exactly the common code they need. This reduces duplicated effort and helps keep the stack minimal, reliable, and consistent.
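As an illustration, the module below is the kind of shared validation and authorization code that might live in a private package instead of being copied into every service. The package name `@your-org/validation` and all three helpers are hypothetical. On the public npm registry, scoped packages like this are published as private by default unless `--access public` is passed.

```typescript
// src/index.ts of a hypothetical private package, e.g. "@your-org/validation".
// Services would install it and import these helpers instead of keeping copies:
//   import { isValidEmail, requireRole } from "@your-org/validation";

export interface User {
  id: string;
  roles: string[];
}

// Shared validation: a deliberately simple email check used by every service.
export function isValidEmail(value: string): boolean {
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(value);
}

// Shared authorization: one definition of "does this user have the role?".
export function hasRole(user: User, role: string): boolean {
  return user.roles.includes(role);
}

// Throws instead of returning, so route handlers can fail fast.
export function requireRole(user: User, role: string): void {
  if (!hasRole(user, role)) {
    throw new Error(`user ${user.id} lacks role "${role}"`);
  }
}
```

When the rules change, the package gets a new version and each service picks it up by updating one dependency, rather than every team editing its own copy.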

4. Over-Engineering

Problem

When all you have is a hammer, everything looks like a nail. Once you have seen the benefits that microservice architectures provide, it can be tempting to turn everything in your enterprise application into a microservice.

In practice, it isn't a good idea to convert every last function and module into a microservice. Each additional microservice adds cost, and over-engineering can quickly offset the savings gained by employing microservices judiciously. How many services are too many?

Solution

When creating microservices, careful consideration should be given to the advantages microservices will bring, weighing them against the costs involved in creating a microservice. Ideally, every service should have as broad a scope as possible while still getting the advantages of microservices.

If there isn't a compelling reason to create a microservice, such as optimizing for scalability, data security, or team ownership, don't create one. You know you have too many services when costs begin to inflate and managing the system becomes more complex than managing a more monolithic system would be.

5. Breaking Changes

Problem

While microservice architectures are generally robust to failure due to their modularity, one drawback is that changes in one service can end up breaking services downstream from it. Given that some services depend on other services to operate, how can you ensure that a change to one service doesn’t break other services?

Solution

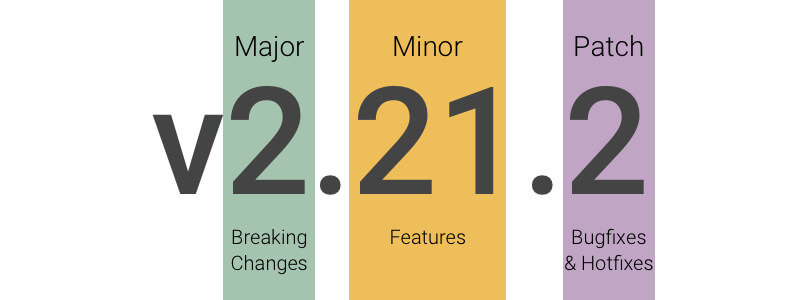

The best way to avoid changes that break a microservice architecture is to use service versioning: each service's API should carry an explicit version. Proper versioning ensures that consumers always have a working version of the API to call, and the version only needs to change when a breaking change is made. Backward-compatible changes, such as adding an optional field, do not require a new version.
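A minimal sketch of URL-based API versioning in TypeScript, assuming a hypothetical customers endpoint whose v2 splits `name` into `firstName` and `lastName`. Because v1 keeps being served alongside v2, existing consumers keep working while new ones adopt v2. In an Express application, the same idea is commonly expressed by mounting separate routers, for example `app.use("/v1", v1Router)` and `app.use("/v2", v2Router)`.

```typescript
interface CustomerRow { id: string; firstName: string; lastName: string }

// Hypothetical data owned by a customers service.
const customerStore: Record<string, CustomerRow | undefined> = {
  c1: { id: "c1", firstName: "Ada", lastName: "Lovelace" },
};

type Handler = (id: string) => object | undefined;

// v1 returned a single "name" field. v2 splits it into two fields, which would
// break v1 clients, so v2 is served alongside v1 rather than replacing it.
const handlersByVersion: Record<string, Handler | undefined> = {
  v1: (id) => {
    const c = customerStore[id];
    return c && { id: c.id, name: `${c.firstName} ${c.lastName}` };
  },
  v2: (id) => {
    const c = customerStore[id];
    return c && { id: c.id, firstName: c.firstName, lastName: c.lastName };
  },
};

// Dispatches "/v{n}/customers/{id}" to the handler for that API version.
function route(path: string): { status: number; body: object } {
  const match = /^\/(v\d+)\/customers\/([^/]+)$/.exec(path);
  if (!match) return { status: 404, body: { error: "not found" } };
  const [, version, id] = match;
  const handler = handlersByVersion[version];
  if (!handler) return { status: 404, body: { error: `unknown API version ${version}` } };
  const body = handler(id);
  return body ? { status: 200, body } : { status: 404, body: { error: "no such customer" } };
}

console.log(route("/v1/customers/c1")); // old clients keep their contract
console.log(route("/v2/customers/c1")); // new clients opt in to the change
```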

When a breaking change is made, the downstream endpoints can be checked against the new upstream endpoints. If a downstream service is affected, it can be updated to the new version. Versioning keeps changes to a minimum, because only the services that would stop working after the upstream change need to be modified.
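Finding which downstream services to check can be partly automated. The sketch below assumes that each consumer records the major version of each provider API it was built against (a hypothetical convention) and that versions follow MAJOR.MINOR.PATCH semantic versioning, where only a major bump signals a breaking change. `impactedConsumers` then lists the downstream services that need attention when a provider publishes a new version.

```typescript
// One edge in the service dependency graph. Recording the expected major
// version per consumer is a hypothetical convention for this sketch.
interface Dependency {
  consumer: string;
  provider: string;
  expectedMajor: number;
}

// Extracts MAJOR from a "MAJOR.MINOR.PATCH" semantic version string.
function majorOf(version: string): number {
  const m = /^(\d+)\.\d+\.\d+/.exec(version);
  if (!m) throw new Error(`not a MAJOR.MINOR.PATCH version: ${version}`);
  return Number(m[1]);
}

// Consumers built against a different major version of the provider are the
// only ones that need to be checked and possibly updated; minor and patch
// releases are assumed to be backward compatible.
function impactedConsumers(deps: Dependency[], provider: string, newVersion: string): string[] {
  const newMajor = majorOf(newVersion);
  return deps
    .filter((d) => d.provider === provider && d.expectedMajor !== newMajor)
    .map((d) => d.consumer);
}

// Hypothetical dependency graph.
const graph: Dependency[] = [
  { consumer: "billing", provider: "customers", expectedMajor: 1 },
  { consumer: "shipping", provider: "customers", expectedMajor: 1 },
  { consumer: "reports", provider: "orders", expectedMajor: 1 },
];
console.log(impactedConsumers(graph, "customers", "2.0.0"));
```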

DevOps Considerations: Infrastructure Explosion

Problem

While we have covered the primary issues associated with utilizing microservices, there are additional considerations that should be evaluated when creating microservice architectures. The following issue is more of a DevOps issue than a problem directly related to microservices, but it’s still worth covering.

Using a large number of microservices can lead to an infrastructure explosion. Every microservice needs its own network and application services, and each one becomes a separate scaling domain. The system has to be automated and orchestrated using cloud tools and technologies. So how can you manage deployments and maintenance as infrastructure demands grow?

Solution

Infrastructure demands can be addressed with three practices:

- CI/CD Pipelines

- Infrastructure-as-code/Declarative Infrastructure

- Container Orchestration

The best way to manage and maintain deployments is with containers and automation pipelines. Properly configured CI/CD pipelines let individual microservices build and deploy without affecting other services, and microservice teams can update/deploy without waiting for other teams to complete their work. A robust CI/CD pipeline ensures reliability and velocity.
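One way to let each service build and deploy independently in a monorepo is to run only the pipelines whose source changed. The TypeScript sketch below assumes a hypothetical layout with one directory per service plus a shared directory that every service builds from. Many CI systems offer this declaratively (GitHub Actions, for example, supports `paths` filters on push triggers), so this only illustrates the selection logic.

```typescript
// Hypothetical monorepo layout: one directory per service.
const serviceDirs: Record<string, string> = {
  orders: "services/orders/",
  customers: "services/customers/",
  billing: "services/billing/",
};

// Source that every service builds from directly (hypothetical). If services
// instead consumed a versioned package, a change here would not force redeploys.
const sharedDirs: string[] = ["packages/shared/"];

// Returns the services whose build-and-deploy pipelines should run for a commit.
function servicesToDeploy(changedFiles: string[]): string[] {
  const touchesShared = changedFiles.some((f) => sharedDirs.some((dir) => f.startsWith(dir)));
  if (touchesShared) return Object.keys(serviceDirs); // a shared change affects everyone
  return Object.keys(serviceDirs).filter((svc) =>
    changedFiles.some((f) => f.startsWith(serviceDirs[svc])),
  );
}

console.log(servicesToDeploy(["services/orders/src/index.ts", "README.md"]));
```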

Microservices can be defined using infrastructure-as-code and declarative infrastructure techniques. Tools like Terraform and AWS CloudFormation can automate the cloud resources a microservice needs, while configuration written in formats such as YAML and JSON lets other microservice components be automated as well.
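CloudFormation templates are plain JSON or YAML, so they can be generated and reviewed like any other code. The sketch below builds a template for one service's Amazon SQS queue; the service name, the `<service>-events` queue naming convention, and the timeout value are hypothetical. The resulting file could be deployed with `aws cloudformation deploy --template-file <file> --stack-name <name>`.

```typescript
// Builds a CloudFormation template (as JSON text) for one service's SQS queue.
// The "<service>-events" queue naming convention is hypothetical.
function queueTemplate(service: string, visibilityTimeoutSeconds: number): string {
  // Logical IDs must be alphanumeric, so strip anything else from the name.
  const base = service.replace(/[^A-Za-z0-9]/g, "");
  const logicalId = base.charAt(0).toUpperCase() + base.slice(1) + "Queue";
  const template = {
    AWSTemplateFormatVersion: "2010-09-09",
    Description: `Messaging resources for the ${service} service`,
    Resources: {
      [logicalId]: {
        Type: "AWS::SQS::Queue",
        Properties: {
          QueueName: `${service}-events`,
          VisibilityTimeout: visibilityTimeoutSeconds,
        },
      },
    },
    Outputs: {
      // For an SQS queue, Ref returns the queue URL.
      QueueUrl: { Value: { Ref: logicalId } },
    },
  };
  return JSON.stringify(template, null, 2);
}

console.log(queueTemplate("orders", 60));
```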

Declarative infrastructure makes infrastructure development a separate, independent task, and it can be used to reliably and efficiently give microservices access to external services such as Redis and Bigtable.

Containerized services can be managed with either Docker Compose or Kubernetes. Both tools let you describe how your microservices are configured and then deploy them with a single command. Docker Compose is best suited to running multi-container applications on a single host, while Kubernetes is designed to orchestrate containers across clusters and multiple cloud environments.
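Kubernetes manifests can also be generated per service. The sketch below builds an `apps/v1` Deployment for one microservice; the image name, port, and replica count are hypothetical. Because `kubectl` accepts JSON as well as YAML, the printed output could be saved to a file and applied with `kubectl apply -f`. A real setup would usually add a Service, resource limits, and health probes.

```typescript
interface ServiceSpec {
  name: string;
  image: string;
  port: number;
  replicas: number;
}

// Builds an apps/v1 Deployment for one microservice. The pod template labels
// must match the selector, so both reuse the same label object.
function deploymentManifest(svc: ServiceSpec) {
  const labels = { app: svc.name };
  return {
    apiVersion: "apps/v1",
    kind: "Deployment",
    metadata: { name: svc.name, labels },
    spec: {
      replicas: svc.replicas,
      selector: { matchLabels: labels },
      template: {
        metadata: { labels },
        spec: {
          containers: [
            { name: svc.name, image: svc.image, ports: [{ containerPort: svc.port }] },
          ],
        },
      },
    },
  };
}

// Hypothetical image and port; prints JSON that `kubectl apply -f` can read.
const ordersDeployment = deploymentManifest({
  name: "orders",
  image: "registry.example.com/orders:1.4.0",
  port: 3000,
  replicas: 2,
});
console.log(JSON.stringify(ordersDeployment, null, 2));
```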

Conclusion

Overall, microservice architectures are extremely useful structures that can improve the reliability of your applications, but they must be designed with care to avoid the pitfalls described above. Bitovi can help you with the DevOps aspects of your Node.js applications, enhancing your automation pipelines to ensure your microservices operate optimally.
