Chat with Us!

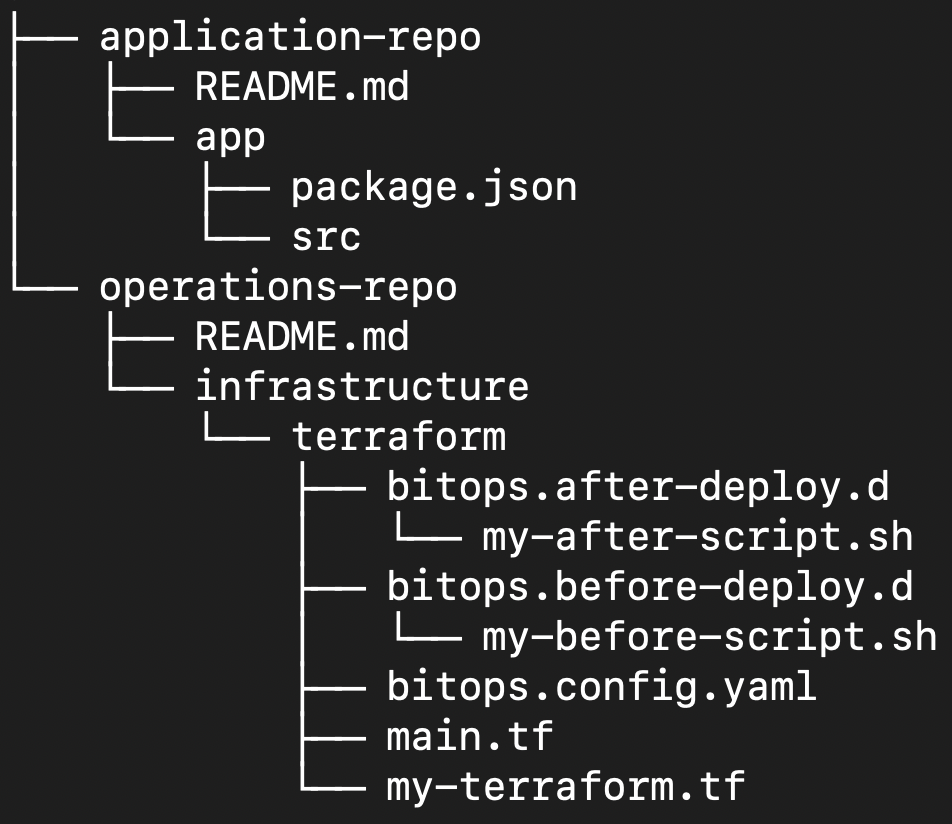

We answer every question on our Slack channel. If you have any questions about what BitOps can do for you, or about transitioning your code to an Operations Repo, please don't hesitate to reach out. You can also follow us on Twitter or GitHub for the latest updates!